The artificial intelligence landscape remains murky when it comes to accountability, transparency and capabilities for many organizations, as shown in ISACA’s 2026 AI Pulse Poll.

The global pulse poll, reflecting responses from more than 3,400 digital trust professionals across IT audit, governance, cybersecurity, privacy and emerging technology roles, finds that even as AI usage accelerates across the enterprise landscape, there appears to be limited human oversight over AI decision-making, little disclosure around AI use, and uncertainty around AI security incident response and accountability for AI system harm.

Below are five sneak-peek findings from the 2026 AI Pulse Poll. The full 2026 AI Pulse Poll from ISACA will be released in early May.

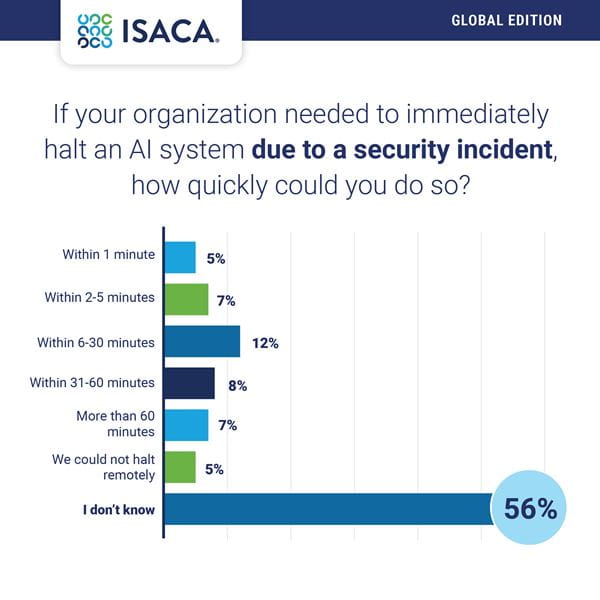

Putting the brakes on AI is no simple task. Time is of the essence when security incidents strike, so respondents’ lack of confidence in being able to swiftly stop AI systems is a potentially major problem. More than half (56 percent) indicate they do not know how quickly they could halt an AI system in response to a security incident. Thirty-two percent believe they could halt it within 60 minutes, and 7 percent say it would take them more than 60 minutes.

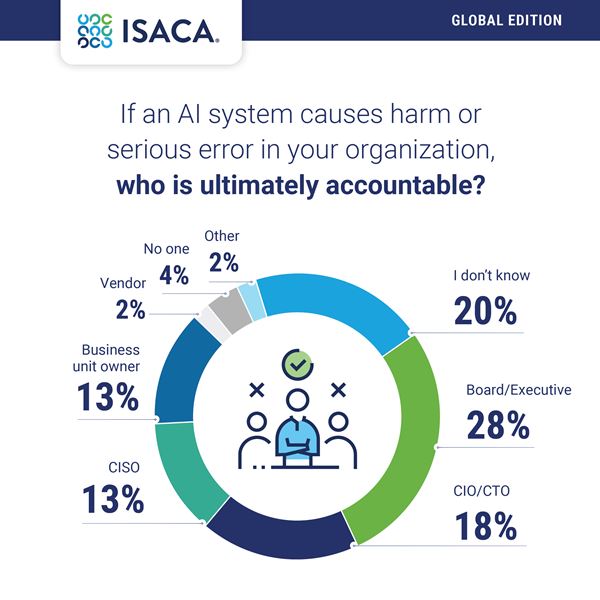

Mixed views on ultimate AI responsibility. More than one-quarter of respondents (28 percent) point to their board/executives when it comes to ultimate responsibility. Another 18 percent believe that their CIO/CTO would be responsible, 13 percent assign the responsibility to their CISO, and 20 percent do not know where the responsibility would lie.

Meanwhile, ISACA Now blog author ShanShan Pa highlights the value of a shared responsibility model for AI.

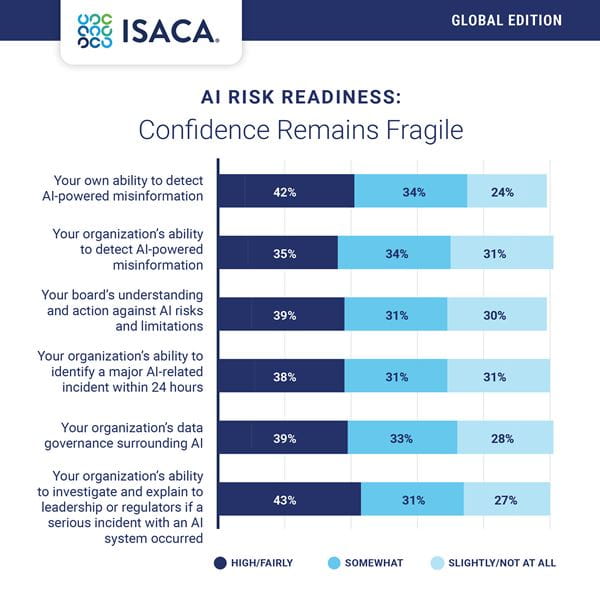

Confidence is lagging in explaining AI incidents. AI security and privacy incidents are becoming prevalent, often falling into predictable patterns. Yet fewer than half of Pulse Poll respondents (43 percent) are completely or fairly confident in their organization’s ability to investigate a serious AI system incident and explain it to leadership or regulators. Only 39 percent are completely or fairly confident in their organization’s data governance around AI.

Many AI-generated actions are taking place without human oversight. Only 36 percent of Pulse Poll respondents say that humans approve most AI-generated actions before execution, and 26 percent report that humans review selected decisions or patterns after execution. Additionally, 11 percent say that humans intervene only when alerted to potential issues, and 20 percent say they do not know how humans oversee AI decision-making at their organization.

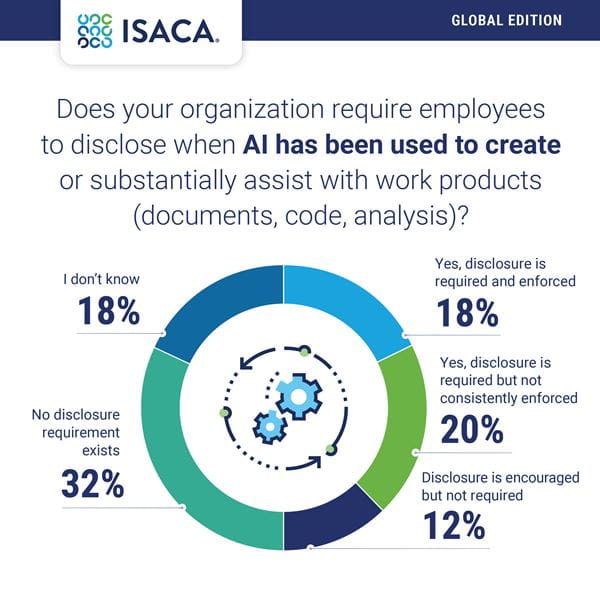

Uncertainty lingers about transparency. Organizations often struggle to explain where, why and how they are leveraging AI. “Many enterprises with advancing AI programs have articulated governance principles, like fairness, transparency, accountability, and human oversight but they struggle to put them into the operational policies, workflows, and controls that govern day-to-day AI deployment,” writes Keith Bloomfield-DeWeese.

The Pulse Poll underscores those concerns. Only 18 percent of respondents indicate that disclosure is required and enforced if AI has been used to create or substantially assist with work products, while 20 percent say that disclosure is required but not consistently enforced. Another 32 percent note that no disclosure requirements exist.

See more insights from ISACA’s 2026 AI Pulse Poll in May. For more AI resources from ISACA, visit www.isaca.org/ai.