Organizations across industries are adopting artificial intelligence (AI) and machine learning (ML) technologies. As adoption grows, model development and data management become high-risk areas that organizations must address. Because robotic process automation (RPA) resembles AI and ML model development in how it turns inputs into outputs, RPA risk management principles can help organizations implement processes that accelerate governance programs for these emerging technologies. Organizations looking to bolster their defenses should look to these principles to mitigate the model development and data management risk associated with AI and ML.

AI and ML Model Development and Data Management Risk

Two of the most critical inputs to AI and ML technologies are the type of model and the data used to train it. Both can significantly influence the output these technologies generate. When adopting them, it is essential to carefully consider the high-risk processes of model development and data management.

To better appreciate model development and data management risk, it is important to understand how AI and ML models are built and developed. At the heart of any AI and ML technology is a model that generates output for users. There are various types of AI models, such as neural networks and large language models (LLMs). Examples of ML models include linear regression, classification models, and random forests. Each model serves specific use cases and has its own methods for validating accuracy. Thus, selecting the appropriate model for the intended use case is crucial for an organization.

Once the appropriate model has been selected, the model is developed and trained using the training data. Cybersecurity and compliance teams can perform a risk assessment of an organization’s AI and ML model development and data management processes based on the model utilized and its underlying training data.

When performing a risk assessment of an organization’s AI and ML model development process, there are several important questions for organizations to consider:

- Does the organization document requirements, training data sources, expected outputs, and model validation methods for AI and ML models prior to starting development? Failure to document these project details could result in models that do not meet the business requirements for these technologies (i.e., Risk 1).

- Does the organization have a process to evaluate the business requirements and select the best AI and ML model for the use case? Failure to do so could cause the model to generate erroneous output that may disrupt operations and cause financial losses (i.e., Risk 2). For example, a simple linear regression (e.g., ordinary least squares [OLS]) may produce erroneous predictions of inventory replenishment levels when a time series model (e.g., autoregressive integrated moving average [ARIMA]) would be more appropriate based on historical replenishment patterns.

- Are the duties related to the model development process segregated across those involved with the project? For example, are the individuals who develop the models different from those who train and validate the models? Failure to enforce segregation of duties in the model development process could introduce bias (e.g., sample bias), which could cause the model to generate erroneous output that may disrupt operations and cause financial losses (i.e., Risk 3). For example, bias may arise when training a model on a data source that is not representative of the population of data (e.g., using inventory purchase data from only one of the organization’s vendors to train an inventory replenishment model for the entire organization).

- Does the organization review and approve trained AI and ML models prior to deploying them? Failure to review and approve trained AI and ML models prior to deployment could result in deploying a model that generates erroneous output or does not meet business requirements (i.e., Risk 4).
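The model selection question behind Risk 2 can be made concrete with a holdout comparison. The sketch below is illustrative only: the replenishment series is synthetic, the function names are hypothetical, and a seasonal naive forecast stands in for a full time series model such as ARIMA so that the example needs nothing beyond the Python standard library.

```python
import math
from statistics import mean


def fit_linear_trend(y):
    """Ordinary least squares fit of y against the time index 0..n-1."""
    n = len(y)
    t_bar = (n - 1) / 2
    y_bar = mean(y)
    sxx = sum((t - t_bar) ** 2 for t in range(n))
    sxy = sum((t - t_bar) * (v - y_bar) for t, v in enumerate(y))
    slope = sxy / sxx
    return slope, y_bar - slope * t_bar


def forecast_linear(train, horizon):
    """Extend the fitted straight line past the end of the training data."""
    slope, intercept = fit_linear_trend(train)
    n = len(train)
    return [intercept + slope * (n + h) for h in range(horizon)]


def forecast_seasonal_naive(train, horizon, period):
    """Repeat the value observed one season earlier (stand-in for ARIMA)."""
    n = len(train)
    return [train[n - period + (h % period)] for h in range(horizon)]


def mae(actual, predicted):
    """Mean absolute error between two equal-length sequences."""
    return mean(abs(a - p) for a, p in zip(actual, predicted))


# Synthetic monthly replenishment quantities (assumed data): mild growth
# plus a yearly cycle. The last 12 months are held out for evaluation.
series = [100 + 0.5 * t + 30 * math.sin(2 * math.pi * t / 12) for t in range(48)]
train, test = series[:36], series[36:]

mae_linear = mae(test, forecast_linear(train, len(test)))
mae_seasonal = mae(test, forecast_seasonal_naive(train, len(test), period=12))

print(f"linear trend MAE:   {mae_linear:.1f}")
print(f"seasonal naive MAE: {mae_seasonal:.1f}")
```

On this seasonal series the straight-line fit has a noticeably larger holdout error than the seasonal forecast. Recording a comparison like this alongside the business requirements gives reviewers evidence that the selected model suits the use case.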

When performing a risk assessment of an organization’s AI and ML data management process, some questions organizations should ask include:

- Does the organization have an inventory of data sources that can be used for AI and ML models? Failure to document data sources used to train AI and ML models could result in extended resolution times when organizations encounter issues or failures with the operation of these models (i.e., Risk 5).

- Does the organization restrict access to data sources that can be used for AI and ML models? If so, is the access reviewed periodically? Failure to restrict access to data sources used to train AI and ML models could result in unauthorized changes to underlying training data. With these changes undetected and unvalidated, the altered training data may cause a model to generate inaccurate or biased output (i.e., Risk 6). The impact on model output from unauthorized changes to training data could be unpredictable and pervasive.

- Does the organization have data integrity controls that maintain the completeness and accuracy of data sources used in AI and ML models? Failure to establish data integrity controls over data sources used in AI and ML models could result in models being trained on incomplete or inaccurate data, which could impact output (i.e., Risk 7).

- Does the organization routinely back up data sources used in AI and ML models and test their recovery? Failure to back up and recover data sources used in AI and ML models could result in extended resolution times when organizations encounter issues or failures with the operation of AI and ML models (i.e., Risk 8).
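One way to approach the data integrity question (Risk 7), and to detect the unauthorized changes described under Risk 6, is to record a fingerprint of each approved training data source and compare against it before every training run. The following is a minimal sketch under stated assumptions: the file name, the sample rows, and the helper names `fingerprint` and `verify` are hypothetical, and a real control would store the baseline somewhere that model developers cannot modify.

```python
import hashlib
import tempfile
from pathlib import Path


def fingerprint(path):
    """Return the SHA-256 digest and line count of a data file."""
    data = Path(path).read_bytes()
    return {
        "sha256": hashlib.sha256(data).hexdigest(),
        "lines": len(data.splitlines()),
    }


def verify(path, baseline):
    """List the differences between a file and its approved baseline."""
    current = fingerprint(path)
    issues = []
    if current["lines"] != baseline["lines"]:
        issues.append(
            f"line count changed: {baseline['lines']} -> {current['lines']}"
        )
    if current["sha256"] != baseline["sha256"]:
        issues.append("content hash does not match the approved baseline")
    return issues


with tempfile.TemporaryDirectory() as tmp:
    # Hypothetical training data source with made-up rows.
    source = Path(tmp) / "purchases.csv"
    source.write_text("vendor,qty\nA,10\nB,7\n")
    baseline = fingerprint(source)       # captured when the data is approved
    clean_check = verify(source, baseline)

    # Simulate an unauthorized edit to one quantity.
    source.write_text("vendor,qty\nA,10\nB,70\n")
    tampered_check = verify(source, baseline)

print(clean_check)
print(tampered_check)
```

The first check returns an empty list, while the second reports the hash mismatch even though the line count is unchanged. A hash comparison only shows that something changed. Completeness and accuracy checks on the content itself would still be needed.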

RPA Risk Management Principles

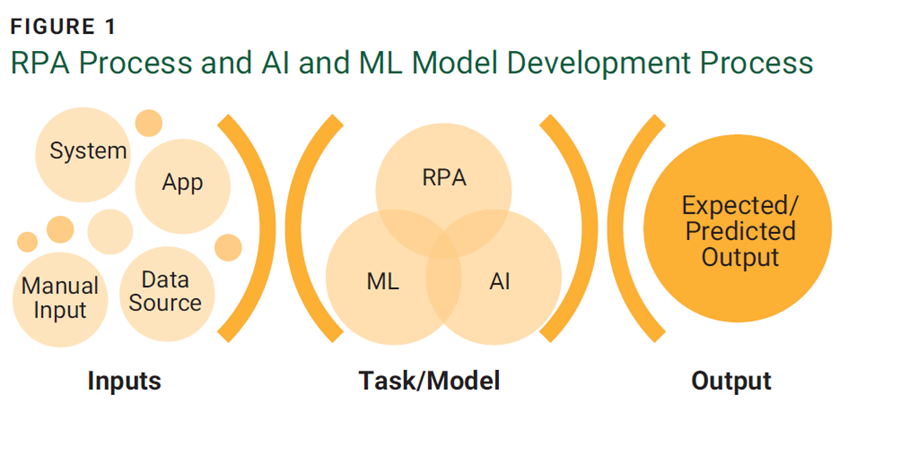

Robotic process automation (RPA) is an emerging technology that automates repetitive, low-judgment tasks performed by humans. The tasks may involve several systems, applications, and other inputs that generate an expected output. RPA accomplishes automation through bots that use these inputs to carry out the tasks and generate the expected output. This is similar to developing an AI or ML model, where the inputs (training data) are identified and used to train an appropriate model that generates output. Figure 1 depicts the similarities between the RPA process and the AI and ML model development process.

Given the similarities, organizations can leverage three RPA risk management principles¹ to mitigate risk associated with AI and ML model development and data management:

- RPA development, change management, and operation: RPA requires appropriate change control and operational procedures related to the development, testing, approval, and deployment of bots.² This is especially important in organizations with developers who are unfamiliar with appropriate change control.³

- RPA credential management: RPA bots usually have accounts that grant them access to different systems and data sources to perform their automation. It is important to document all accounts provisioned to bots, restrict the permissions provided to these accounts, and review the access periodically.⁴

- RPA system and data dependencies: RPA bot systems and data dependencies should be documented.⁵ This is important as it can help with resolving RPA errors and failures that might arise.⁶

Application of RPA Risk Management Principles

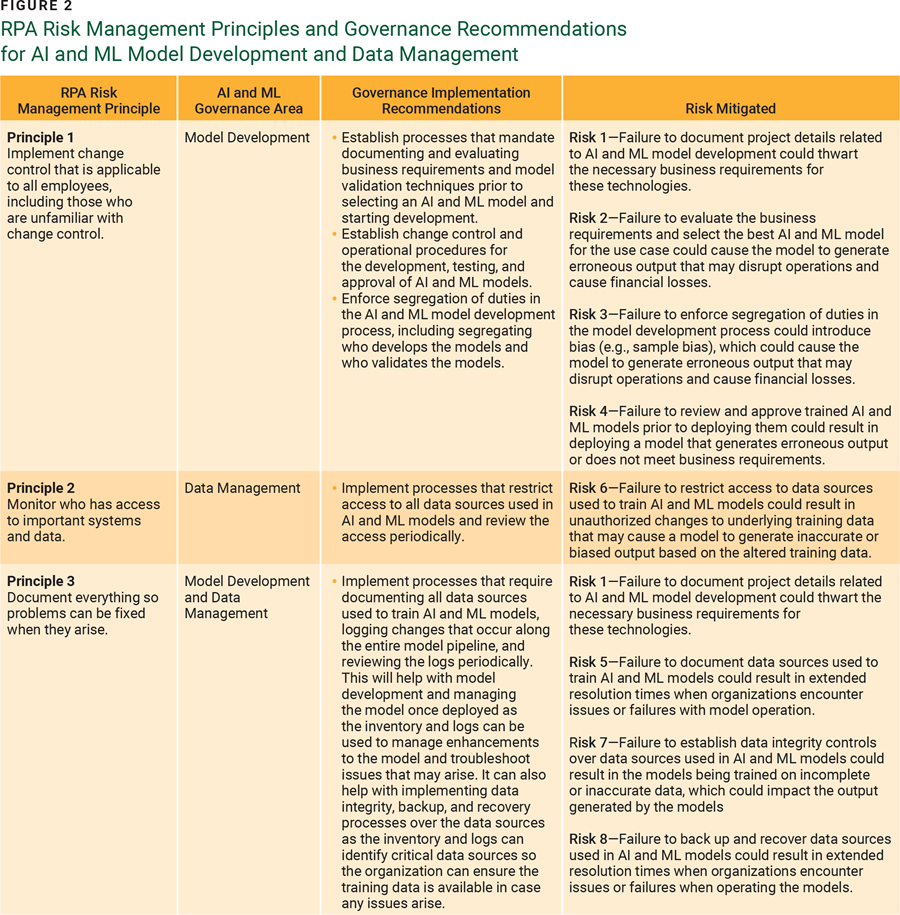

Organizations can leverage RPA risk management principles to mitigate model development and data management risk in several ways:

- Implementing change control that is applicable to all employees, including those who are unfamiliar with change control, can mitigate model development risk. Due to the dependencies of RPA and the scope of users (e.g., citizen developers), RPA change control and operational procedures are important parts of mitigating risk. AI and ML model development poses a similar risk to organizations through its dependencies and scope of users. By applying this principle, organizations can implement several tactics to mitigate model development risk:

  - Establish processes that mandate documenting and evaluating business requirements and model validation techniques prior to selecting an AI and ML model and starting development.

  - Establish change control and operational procedures for the development, testing, and approval of AI and ML models.

  - Enforce segregation of duties in the AI and ML model development process, including segregating who develops and validates the models.

- Monitoring who has access to important systems and data can mitigate data management risk. RPA bots interact with multiple systems, each requiring its own account, so credential management is important. AI and ML data management poses a similar risk to organizations through access to the underlying training data. By applying this principle, organizations can implement processes that restrict access to all data sources used in AI and ML models and review access permissions periodically.

- Documenting systems, data sources, and changes allows problems to be fixed when they arise, mitigating model development and data management risk. RPA relies on several systems and data dependencies, so documenting all of them is critical to resolving RPA errors and failures. Similarly, AI and ML model development and data management pose comparable risk to organizations through their reliance on systems and dependencies. By applying this principle, organizations can implement processes that require documenting all data sources used to train AI and ML models, logging changes that occur along the entire model pipeline, and reviewing the logs periodically. After the model has been deployed, the inventory and logs can be used to manage enhancements to the model and troubleshoot issues that may arise. They can also support data integrity, backup, and recovery processes for the data sources by identifying critical data sources, so the organization can ensure that the training data is available if any issues arise.

Figure 2 depicts how RPA risk management principles can be used to mitigate risk associated with AI and ML model development and data management.
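The tactics above can be partly automated as checks that run before a model is deployed. The sketch below is one possible shape for such checks, not a prescribed implementation: the `ModelRelease` record, its field names, the sample people and file names, and the 90-day review interval are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class ModelRelease:
    """Hypothetical record of what is known about a model before deployment."""
    name: str
    developer: str
    validator: str
    approver: str = ""
    requirements_doc: str = ""
    data_sources: list = field(default_factory=list)


def release_blockers(release):
    """Return the reasons a release should not be deployed yet."""
    blockers = []
    if not release.requirements_doc:
        blockers.append("requirements and validation method not documented")
    if not release.data_sources:
        blockers.append("no training data sources recorded")
    if release.developer == release.validator:
        blockers.append("developer and validator are the same person")
    if not release.approver:
        blockers.append("no approval recorded")
    return blockers


def overdue_access_reviews(last_reviewed, today, max_age_days=90):
    """Return data sources whose access was last reviewed too long ago.

    The 90-day default is an assumed policy value, not a standard.
    """
    cutoff = today - timedelta(days=max_age_days)
    return sorted(name for name, reviewed in last_reviewed.items() if reviewed < cutoff)


draft = ModelRelease(name="replenishment-forecast", developer="avery", validator="avery")
ready = ModelRelease(
    name="replenishment-forecast",
    developer="avery",
    validator="blake",
    approver="casey",
    requirements_doc="REQ-001",          # hypothetical document identifier
    data_sources=["purchases.csv"],
)
draft_blockers = release_blockers(draft)
ready_blockers = release_blockers(ready)

reviews = {"purchases.csv": date(2024, 1, 15), "vendors.csv": date(2024, 5, 1)}
overdue = overdue_access_reviews(reviews, today=date(2024, 6, 1))

print(draft_blockers)
print(ready_blockers)
print(overdue)
```

The draft release is blocked on all four checks, the completed release passes, and only the data source reviewed in January is flagged as overdue. Checks like these supplement human review and approval. They do not replace it.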

Conclusion

RPA risk management principles such as implementing change control, monitoring access, and documenting everything can be used to help navigate emerging technologies and accelerate governance programs. The key is to learn about the disruptive technology and determine how to apply existing risk management principles to new forms of risk. By taking an incremental approach to implementing RPA risk management principles, organizations can make new technologies seem less daunting, protect valuable data, and maintain consumer trust.

Endnotes

1 Hong, B.; Ly, M.; et al.; “Robotic Process Automation Risk Management: Points to Consider,” Journal of Emerging Technologies in Accounting, vol. 20, iss. 1, 2023

2 Hong; “Robotic Process Automation”

3 Hong; “Robotic Process Automation”

4 Hong; “Robotic Process Automation”

5 Ly, M.; Lin; “The Internal Audit Digital Associate: A Primer to Help Automate,” Internal Auditing, 2021

6 Ly; “The Internal Audit”

MICHAEL LY, CISA, CPA

Is an audit and risk management professional with experience in financial services, consumer products, technology, and healthcare. He specializes in data science, IT governance, and automation.

YUE HONG, PH.D., CFA, CPA

Is an assistant professor of accounting at DePaul University (Chicago, Illinois, USA). Her research focuses on technology and its impact on human decision making and well-being.