By now, the story of an AI chatbot’s unintended actions and their impact on a major airline is a well-known cautionary tale: An Air Canada passenger was awarded more than CAD$800 in damages and court fees after the airline’s AI-powered chatbot erroneously informed the passenger that bereavement airfare could be applied retroactively to travel that had already taken place. Although Air Canada’s official policy states that bereavement fares cannot be applied retroactively, British Columbia’s Civil Resolution Tribunal held that Air Canada was responsible for the information provided by the chatbot on its website and ruled in favor of the passenger.1

Writing in his decision, one of the Tribunal members stated, “It should be obvious to Air Canada that it is responsible for all the information on its website. It makes no difference whether the information comes from a static page or a chatbot.”2

When it comes to the courts—including the court of public opinion—the verdict is clear: Organizations are responsible for the outputs and decisions of their AI systems, whether they claim to be or not. Although many organizations are still grappling with how to handle AI accountability, the good news is that establishing accountability mechanisms can be fairly straightforward, especially when leveraging governance frameworks that are already available.

A Lack of Accountability

It might seem that experiences such as Air Canada’s would send other organizations scrambling to establish robust AI governance structures with clearly defined roles and responsibilities; however, according to at least one survey, that has not necessarily been the case.

According to the 2024 AI Benchmarking Survey, a joint project of ACA Group’s ACA Aponix and the National Society of Compliance Professionals (NSCP), many financial services organizations lack formal AI governance frameworks, testing protocols, and third-party oversight.3 The survey found that only 32% of respondents have established an AI committee or governance group, only 12% of those using AI have adopted an AI risk management framework, and just 18% have established a formal testing program for AI tools. Meanwhile, 92% have yet to adopt policies and procedures to govern AI use by third parties or service providers.4 Without these mechanisms in place, organizations cannot develop and deploy AI systems responsibly, nor can they be accountable for the decisions those systems make.

This lack of accountability does not necessarily stem from recklessness, as one might imagine. More likely, individuals and teams are working on AI projects in good faith, but at least some of the following symptoms are present:

- There is a lack of coordination of AI development efforts.

- There is a lack of documentation of roles, responsibilities, and development procedures.

- There is not a shared understanding of the purpose for the organization’s use of AI.

- There is a knowledge gap around legal and compliance risk.

- There is no proactive consideration of explainability and societal impacts.

These deficiencies are not terribly difficult to overcome within the context of a robust AI risk management framework, but enterprises that utilize AI in any form must realize that the stakes associated with lax controls are only rising as AI becomes increasingly integrated into business and everyday life.

Regulatory Compliance Risk

Accountability for AI use is increasingly important as regulation around AI continues to take shape and expand. Organizations must account for, explain, test, and control their AI systems to avoid exposure to legal, reputational, and financial harm.

Regulatory non-compliance is a significant risk on its own, but customers and prospective customers, out of concern for their own reputations, are also asking organizations to demonstrate responsible AI practices. In 2024, as AI continued to receive increasing attention, there was a surge in enterprises responding to questionnaires from customers and business partners about their responsible use of AI.5 The ideal response to such questions is to affirm compliance and alignment with regulations and leading governance frameworks.

EU AI Act

Much as the EU General Data Protection Regulation (GDPR)6 did for privacy regulation, the EU AI Act, which entered into force on 1 August 2024,7 is expected to set the tone for AI regulation around the world. The Act takes a risk-based approach to determining regulatory requirements for entities that deploy AI systems. Specifically, the Act sorts AI uses into four tiers based on the risk of the use case:

- Unacceptable risk—Prohibited uses (e.g., social scoring systems, manipulative AI)

- High risk—Uses with potential impact to human health and safety, personal liberty, or society (e.g., medicine, public transport, law enforcement)

- Limited risk—Systems that interact with end users (e.g., chatbots and deepfakes) without the potential impacts of high-risk systems

- Minimal risk—AI systems that do not impact, or interact with, end users (e.g., AI-enabled video games, spam filters)
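
For teams triaging their own AI use cases, the four tiers above can be captured as a simple lookup. The following is a minimal Python sketch, not a legal classification tool: the `RiskTier` enum, the `EXAMPLE_TIERS` table, and the `triage` and `is_prohibited` functions are hypothetical names, the table holds only the examples listed above, and any use case not in the table is flagged for manual review rather than guessed.

```python
from enum import Enum
from typing import Optional


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Illustrative only: these are the example uses named in the list above,
# not an exhaustive or authoritative mapping of the Act's requirements.
EXAMPLE_TIERS = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "manipulative ai": RiskTier.UNACCEPTABLE,
    "medicine": RiskTier.HIGH,
    "public transport": RiskTier.HIGH,
    "law enforcement": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "deepfake": RiskTier.LIMITED,
    "video game": RiskTier.MINIMAL,
    "spam filter": RiskTier.MINIMAL,
}


def triage(use_case: str) -> Optional[RiskTier]:
    """Return the tier for a known example, or None to signal manual review."""
    return EXAMPLE_TIERS.get(use_case.strip().lower())


def is_prohibited(use_case: str) -> bool:
    """True only when the use case maps to the unacceptable-risk tier."""
    return triage(use_case) is RiskTier.UNACCEPTABLE
```

Returning `None` for unknown use cases is a deliberate choice in this sketch: an unrecognized use should be escalated to legal or compliance review, not silently treated as minimal risk.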

US State Legislation

While there is currently no overarching federal regulation of AI in the United States, several states have passed laws attempting to place guardrails around AI, and many more laws are in various stages of development. Echoing the risk-based nature of the EU AI Act, early efforts to regulate AI in the United States have focused primarily on AI systems that interact with humans and/or make decisions that have the potential to impact humans. For example, the State of Utah’s AI Policy Act, which took effect 1 May 2024, is the first law in the United States that imposes disclosure requirements on organizations that use generative AI when interacting with consumers.8

Begin With Governance

The most direct path to ensuring regulatory compliance and minimizing legal, financial, and reputational risk associated with AI is to adopt an established AI governance framework. The US National Institute of Standards and Technology (NIST) AI Risk Management Framework (RMF)9 and the International Organization for Standardization (ISO)/International Electrotechnical Commission (IEC) 42001 standard10 are two of the leading forms of guidance. The Organisation for Economic Co-operation and Development (OECD) AI principles11 also serve as a model of what responsible AI looks like.

NIST AI Risk Management Framework

NIST’s AI RMF is a voluntary resource that helps organizations designing, developing, deploying, or using AI systems to manage AI risk and promote trustworthy and responsible AI. It is intended to be broad and non-prescriptive, and to be revised over time, so that organizations can adapt it to their unique needs. Unlike the EU AI Act, which bases regulatory obligation primarily on use case, the NIST AI RMF is intended to be use case-agnostic.

ISO/IEC 42001

ISO/IEC 42001 is an international standard for establishing, implementing, maintaining, and continually improving an AI management system (AIMS) within organizations. It is the world's first international standard on AIMS.

ISO certification is globally recognized as a symbol of safety, quality, and reliability. The ISO/IEC 42001 standard includes requirements for a broad range of topics relevant to the AIMS:

- Organizational strategy and AI governance

- AI risk management

- Data privacy

- Responsible AI system development and deployment practices

- Data quality monitoring

- Human rights

- Fairness and other ethical considerations

- Environmental impact

- Human oversight over AI systems

OECD AI Principles

The OECD AI principles guide key AI players in their efforts to develop trustworthy AI and provide policymakers with recommendations for effective AI policies. The principles are:

- Inclusive growth, sustainable development, and well-being

- Human rights and democratic values, including fairness and privacy

- Transparency and explainability

- Robustness, security, and safety

- Accountability

Before formal adoption or application of a governance framework begins, however, there are more basic steps that organizations can and should take. Exploring these key areas will establish a foundation of AI accountability and ensure that the organization is prepared for success if and when it moves forward with formal framework adoption.

Who Should Be Accountable for AI?

As with other areas, such as information security or ethical culture, accountability for AI can be understood at two levels. On one level, it is the responsibility of everyone in the organization to use AI responsibly. However, it is also critical to understand and identify who the key stakeholders are in AI governance so that the necessary leadership is established.

Stakeholders in AI Governance

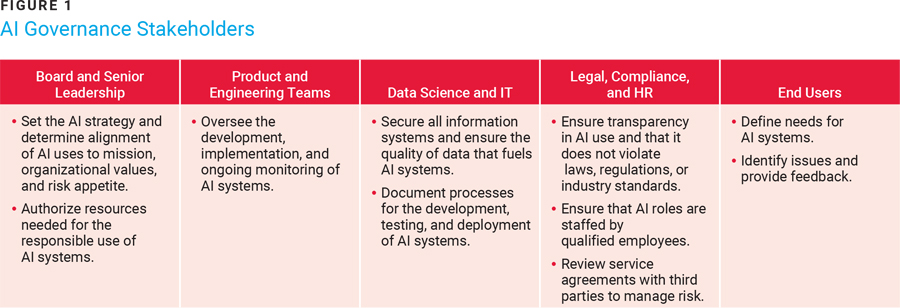

A broad range of stakeholders throughout the organization play key roles in AI governance. Figure 1 summarizes the most critical roles. It might surprise some to see end users included in the discussion of governance roles, but end users are important stakeholders, not only because their needs drive the purpose of the AI system but also, from a governance perspective, because standards such as ISO/IEC 42001 include the organization’s consideration of end user (i.e., customer) needs as a requirement.

When the key stakeholders have been identified, the next step is to bring leaders from these areas together to form a leadership team or steering committee. An AI steering or management committee, composed of cross-functional, senior-level leaders, is vital to the implementation of an effective AI governance structure and represents the kind of specific accountability that is required by AI regulations and standards of practice.

Transparency and Explainability of AI Uses

Across the board, AI regulations and governance frameworks (not to mention consumers) demand that enterprises explain the nature, purpose, and boundaries of their use of AI. At organizations where this description is murky, effort must be invested in crafting an accurate, transparent description of why AI is used (i.e., the business justification) and how it will not be used (e.g., no harm, no black boxes).

Policies and Procedures

Defined norms and expectations in the form of documented policies and procedures will pave the way for AI accountability. The number and type of policies needed may vary by organization type and use of AI, but there are some core policies and procedural documentation that must be in place, such as:

- An AI policy

- Public disclosures of AI use and points of interaction with AI, if applicable (i.e., transparency)

- An inventory of AI uses (including applications, models, and technology resources)

- A technical specifications repository

- AI system data flows

- AI development and deployment process documentation (including testing and sign-off requirements)

- Risk assessment and impact assessment procedures, results, and action plans
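
As one way to make the inventory, data flow, and sign-off items above concrete, here is a minimal Python sketch of an inventory record with a completeness check. The `AIInventoryEntry` fields and the `missing_documentation` helper are hypothetical and not drawn from any framework or standard; an organization would adapt the fields to its own policies.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AIInventoryEntry:
    """One AI use in the organization's inventory (hypothetical fields)."""
    name: str
    business_justification: str = ""
    accountable_owner: str = ""
    interacts_with_public: bool = False
    publicly_disclosed: bool = False
    data_flows_documented: bool = False
    risk_assessment_done: bool = False
    testing_signed_off: bool = False


def missing_documentation(entry: AIInventoryEntry) -> List[str]:
    """List the documentation gaps for one inventory entry."""
    gaps = []
    if not entry.business_justification.strip():
        gaps.append("business justification")
    if not entry.accountable_owner.strip():
        gaps.append("accountable owner")
    if not entry.data_flows_documented:
        gaps.append("data flows")
    if not entry.risk_assessment_done:
        gaps.append("risk and impact assessment")
    if not entry.testing_signed_off:
        gaps.append("testing sign-off")
    # Disclosure only applies where people interact with the AI system.
    if entry.interacts_with_public and not entry.publicly_disclosed:
        gaps.append("public disclosure")
    return gaps


# Example: a customer-facing chatbot with an owner but little else recorded.
chatbot = AIInventoryEntry(
    name="support chatbot",
    accountable_owner="Customer Care",
    interacts_with_public=True,
)
print(missing_documentation(chatbot))
```

A check like this could run whenever an inventory entry is created or changed, so that gaps surface before deployment instead of during an audit.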

Once key stakeholders have been identified and acknowledged, and the organization’s AI uses and processes have been documented for transparency, then the organization will be well situated to comply with existing (and forthcoming) legislation and adopt a formal AI governance framework if it chooses to do so.

Conclusion

It is difficult to predict how AI may be used in the future. New applications are continually emerging, and as with past technological advancements, the bounds of what is considered acceptable and unacceptable use are likely to shift as AI becomes more integrated in society. However, regardless of how AI is applied, it is safe to say that transparency will continue to be foundational to any effort to regulate it. Organizations that want to pursue AI opportunities both aggressively and responsibly should focus on documentation and explainability. It is critical to ensure that these aspects are baked into the AI development process.

The same considerations apply to slower adopters of AI. They should understand that sooner or later they will have to demonstrate that they are accountable for their AI uses, and developing that accountability retroactively could be a painful process. Therefore, future decisions in AI investment, regardless of how large, small, fast, or slow, should be accompanied by answers to questions such as:

- Who may be impacted by AI?

- Can we justify AI in the context of our organization’s mission and core values?

- Can we explain AI?

- Do we explain AI to people who need to know?

Organizations that have policies in place to govern AI use, clearly defined roles and responsibilities, a shared understanding of each use case in the context of the regulatory landscape, and processes for human monitoring of AI systems are well on their way to effective AI governance. Enterprises that lack these things must get started sooner rather than later, because regulatory requirements and customer demand for AI transparency are on the rise.

Endnotes

1 Garcia, M.; “What Air Canada Lost in ‘Remarkable’ Lying AI Chatbot Case,” Forbes, 19 February 2024

2 Garcia; “What Air Canada Lost”

3 ACA, “Financial Services Firms Lag in AI Governance and Compliance Readiness, Survey Reveals,” 29 October 2024

4 ACA, “Financial Services Firms”

5 Domin, H.; “AI Governance Trends: How Regulation, Collaboration and Skills Demand Are Shaping the Industry,” World Economic Forum, September 2024

6 Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data and on the free movement of such data, and repealing Directive 95/46/EC (General Data Protection Regulation [GDPR])

7 European Parliament, “EU AI Act: First Regulation on Artificial Intelligence,” European Union, 14 June 2023

8 S.B. 149, Utah, USA, 2024

9 National Institute of Standards and Technology, AI Risk Management Framework, USA, 2023

10 International Organization for Standardization (ISO) and International Electrotechnical Commission (IEC), ISO/IEC 42001:2023 Information technology—Artificial intelligence — Management system, 2023

11 OECD.AI, “OECD AI Principles Overview”

KEVIN M. ALVERO | CISA, CDPSE, CFE

Is chief compliance officer at Integral Ad Science (IAS). He leads the company’s regulatory and industry standards compliance initiatives, spanning its global ad verification products and services. Additionally, he oversees IAS’s AI governance initiatives.

RAMIN KOUZEHKANANI

Is the chief information and innovation officer at Hillsborough County Government. Previously he was a senior advisor to the acting secretary at the Administration for Children and Families at the United States Department of Health and Human Services. He is also a former acting deputy secretary and assistant secretary, and chief information officer (CIO), at the US State of Florida Department of Children and Families.