When Corporate Wisdom Meets Artificial Intelligence

Just a few years ago, it was difficult to imagine professionals across nearly every industry relying on one technology to draft legal arguments, generate code, summarize documents, automate workflows, and make clinical decisions. But today, artificial intelligence (AI) is embedded directly into platforms, pipelines, and systems. Recent surveys show that 88% of organizations use AI in at least one business function, with many moving toward broader enterprise deployment.1

Despite this rapid adoption, understanding of the dangers of AI remains uneven. Many users and business leaders continue to view these systems primarily as productivity accelerators, underestimating their potential to introduce new types of risk. Risk management practices often lag behind deployment, leaving gaps in areas such as data privacy, access control, and regulatory compliance.2

Unfortunately, this is a common pattern for most new technologies. Those who remember the advent of smartphones, 3D printing, or the Internet of Things (IoT) may assume the emergence of AI is on par with those breakthroughs. But it is more akin to the creation of the internet: a blaze of innovation engulfing every industry.

As a result, organizations are beginning to recognize that effective AI governance requires integrating risk management into AI design, deployment, monitoring, and life cycle controls. The central challenge facing enterprises is how to adopt AI responsibly, preserving trust and accountability.3

The Current State of Artificial Intelligence

Despite AI's ability to mimic human thought patterns and speech, none of it is sentient. Most of what is commonly referred to as AI actually consists of machine learning (ML) techniques or large language models (LLMs).

LLMs are trained to generate output based on statistical pattern recognition rather than comprehension or intent. For example, ChatGPT was trained on a mixture of licensed data, data created by human trainers, and publicly available data, and was then refined using reinforcement learning from human feedback (RLHF) to improve response quality. Google’s Bard (now Gemini) was trained in similar ways but also draws its information from the internet.4 The techniques used to develop these systems continue to raise unresolved questions regarding the origin, licensing, and reliability of the data used to train AI-enabled tools.

Large-scale research initiatives such as NVIDIA’s Megatron-LM have demonstrated that advances in distributed training and model parallelism could dramatically scale model capabilities, while simultaneously intensifying concerns related to data provenance, transparency, and trustworthiness.5

While a small number of high-profile companies like OpenAI initially captured the media spotlight, the broader AI ecosystem has rapidly diversified. Startups and platforms such as ChatSonic,6 Jasper,7 and Wordtune8 have reinforced the view of AI as a new class of functional technology that can be embedded across workflows. These systems rely on generative pretraining informed by both public and private sector data and are reshaping how users interact with information, content creation, and decision support.

Generative AI (GenAI) is no longer confined to standalone interfaces. Systems such as Google’s Gemini are designed to function as personal assistants and are capable of tasks ranging from scheduling and research to content synthesis and planning.9 Similarly, with the introduction of Copilot, AI has been integrated directly into Microsoft 365 applications, including Word, Excel, PowerPoint, and Outlook.10

The speed of AI evolution has dazzled even the most seasoned of tech veterans. Nearly every week brings a new pronouncement on AI's advantages, dangers, limitations, or potential, with often contradictory conclusions. It is not a surprise that many business leaders have opted to wait for the AI dust to settle before designing a formal business strategy. But while this may seem like the safest path, a delay carries a risk of its own. Employee use of unsanctioned GenAI tools has continued inside organizations without centralized visibility or control.11

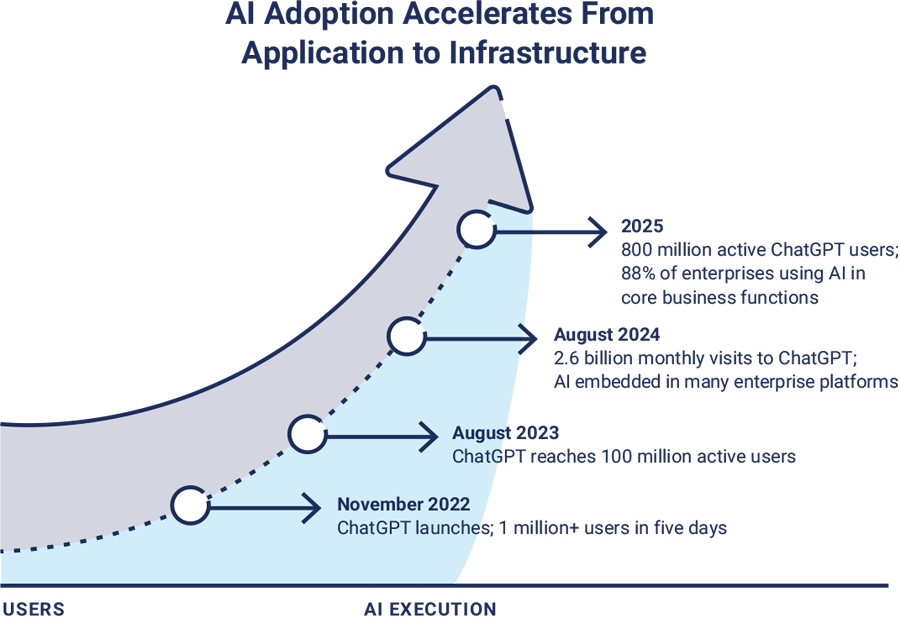

As illustrated in figure 1, AI adoption has progressed beyond rapid consumer adoption into an enterprise execution model.12

Figure 1: AI Adoption and Acceleration

Regardless of how the landscape continues to evolve, it is clear that AI is now a durable component of enterprise infrastructure, and business leaders should assume that AI is already in use within their organizations.

The safest and most responsible path forward is not deferral, but deliberate adaptation that is grounded in visibility, governance, and risk-aware deployment.

To AI or Not to AI

Some companies, such as Stack Overflow, Samsung, Apple, JPMorgan Chase, and Verizon, initially attempted to ban or restrict the use of GenAI tools, citing concerns over data leaks, hallucinations, and intellectual property exposure.13 At the time, this was widely viewed as a prudent response to an unfamiliar and quickly evolving technology.

However, such bans have become difficult to enforce. AI tools continue to proliferate through personal accounts, browser extensions, and third-party integrations. Employees increasingly rely on AI in much the same way they rely on email, spreadsheets, and collaboration platforms. This phenomenon, now commonly referred to as “shadow AI,” has introduced its own category of unmanaged risk.14

Ignoring AI can also leave an organization at a competitive disadvantage, as employees, partners, distributors, and competitors may benefit from it. Even if AI-enabled tools are not used, ignoring this technology can leave an enterprise more vulnerable to risk, such as obsolescence and less access to top talent.

However, unrestrained adoption presents an opposing danger. Misconfigured permissions, insufficient oversight, and unclear accountability can allow AI-enabled actions to propagate across systems and datasets far more rapidly than traditional security and risk frameworks were designed to manage.

For this reason, senior leaders, if they wish to utilize AI technologies, will need to ensure the right infrastructure and governance processes are in place. To accurately understand the vulnerabilities and advantages AI carries, leaders must conduct a thorough risk impact analysis that accounts for the current uncertainty of the AI landscape and its future power.

Identifying AI Risk and Reward

AI presents organizations with both unprecedented opportunity and unprecedented risk. Its ability to accelerate productivity, automate complex tasks, and augment human decision making has the potential to reshape nearly every industry.

However, along with improved productivity comes increased risk and new threats. Organizations have to balance the risk with the rewards.

Leaders must take four important steps to maximize AI value while installing appropriate and effective guardrails:

- Identify AI benefits.

- Identify AI risk.

- Adopt a continuous risk management approach.

- Implement appropriate AI security protocols.

If business leaders are able to follow these steps, they are much more likely to strike a good balance of risk versus reward as AI-enabled tools and processes are leveraged in their enterprises.

Conducting an AI Benefit Analysis

Organizations are using AI to streamline administrative processes, accelerate research and development, enhance customer engagement, and enable new products and services. This advancement in technology efficiency is allowing employees to focus more on strategic processes rather than repetitive tasks; however, organizations need safeguards in place to ensure the accuracy of the output.

In recent years, the pace of competition has increased. Markets that once changed slowly can now be disrupted in months by AI-enabled competitors with minimal staffing and rapid deployment cycles. As a result, a meaningful benefit analysis must extend beyond short-term productivity gains to include long-term strategic positioning, workforce implications, regulatory exposure, and organizational resilience.15

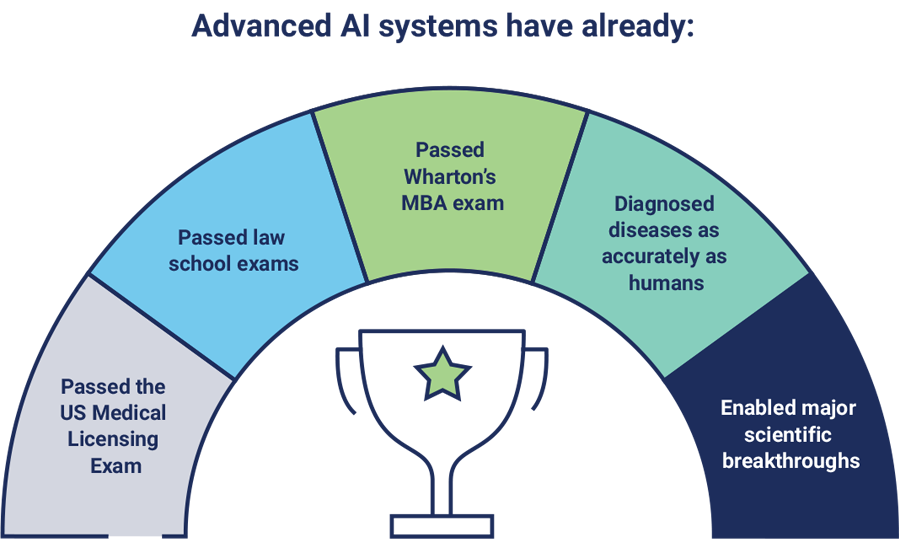

Enterprise titans may find that their AI-enabled competition is smarter, faster, and more lethal than ever before. Figure 2 contains some sample use cases.16

Figure 2: AI Insecurity Complex—Is AI Smarter Than We Are?

Use of AI Toughens Competition

Company A is an industry leader that has dominated its market for 10 years on the strength of one flagship product. The CEO feels secure in the company’s position, as competitive intelligence assures her that no other company’s research and development approaches the functionality of Company A’s product. Then an upstart named Company B uses AI to develop research, design a groundbreaking product, create pricing models and marketing plans, launch a new website and populate online sales channels—all within two weeks, with minimal workforce.

Within just two staff meetings, Company A’s product becomes viewed as outdated, its customer base decamps for Company B, and profits begin to plunge. Other upstarts begin to use AI to dethrone Company B. The market shifts radically within a few months, with Company A fighting and failing to remain relevant.

To conduct an exhaustive benefit analysis of any technology, leaders should look beyond simplistic advantages and revisit the foundational ethos of their companies. Some considerations include:

- What does the company do, and why do they do it?

- How do they do it?

- How are the company’s competitors leveraging AI tools?

- What tools and talents do they rely on, and what are their metrics for success?

- How much will investment in AI cost, and how much return on investment does it deliver?

- How confident is the company that its security protocols and mechanisms will help ensure data quality, integrity, and confidentiality (e.g., intellectual property, personally identifiable information [PII])?

- How might the company’s current strategy and objectives evolve when AI removes significant cost and talent barriers?

- What could staff and partners bring to the AI innovation table?

The answers to these questions will provide a road map that connects AI use cases to the company’s mission and goals.

Types of AI Risk

In earlier stages of AI adoption, risk was framed as hypothetical or future oriented. However, AI-related failures are increasingly observable in the real world, affecting enterprises, governments, and critical infrastructure. Risk associated with AI extends far beyond technical performance issues and isolated security vulnerabilities—AI can exacerbate any existing issues, such as a lack of quality control or poor data integrity, and may introduce new ones.

Societal Risk

As AI continues to influence public discourse, economic systems, and institutional trust, its societal impact has intensified. AI models have enabled the production of highly convincing text, audio, and video content, resulting in the spread of misinformation and disinformation on a global scale.17

Misinformation has grown tremendously due to social media and AI. In fact, the manipulation of information is intentional. Multiple national security organizations and international bodies have identified manipulated content as a material threat to social stability, democratic processes, and public trust. Now more than ever before, the public should be able to rely on the accuracy and completeness of information. However, the risk is unlike earlier forms of digital misinformation, as modern AI-driven campaigns can adapt in real time, test narrative effectiveness, and optimize messaging autonomously.18

In 2023, for example, a fabricated AI-generated image depicting an explosion near the Pentagon circulated on social media and briefly impacted financial markets, demonstrating how synthetic content can trigger real-world consequences before verification mechanisms can intervene.19 Since then, synthetic audio and video impersonations (called “deepfakes”) of executives, journalists, and public officials have become increasingly common.

Societal risk is further compounded by economic disruption. According to a 2025 report published by the World Economic Forum, AI is expected to displace approximately 92 million jobs.20 This disruption introduces not only economic risk, but organizational instability, as enterprises struggle to reskill employees and redefine roles.21

Intellectual Property Risk

AI introduces new pathways for the erosion and leakage of intellectual property. Employees frequently input proprietary information, trade secrets, internal analyses, or confidential communications into AI systems without understanding how that data may be retained, reused, or exposed. Once proprietary information is introduced into external models or improperly governed internal systems, recovery is often impossible.

In 2023, Samsung disclosed that employees inadvertently leaked sensitive internal source code and confidential data by using ChatGPT for work-related tasks.22 This risk is magnified by deeply integrated enterprise AI platforms. Tools such as Microsoft 365 Copilot aggregate data across email, chat platforms, document repositories, and internal systems, increasing the likelihood that sensitive information could be shared with unauthorized users.23

Malicious insider behavior further amplifies this risk. Disgruntled employees may intentionally feed damaging or confidential information into AI tools, resulting in reputational harm or loss of legal protections. Improper disclosure of intellectual property can invalidate patents or trade secret protections, which depend on security controls to preserve confidentiality under applicable laws.

In addition, unresolved legal questions around training data, derivative works, and ownership of AI-generated output continue to create uncertainty. Courts and regulators increasingly treat AI-generated content as a source of ongoing legal exposure rather than a settled compliance issue, particularly in jurisdictions with evolving copyright and data protection regimes.24

Invalid Ownership

Any company using AI to create output—such as a marketing tagline—must guarantee it is truly original intellectual property and not derivative of another person's work.25 If not, these businesses may discover their ownership of the new assets is invalid. Further, the US Copyright Office has rejected copyrights for some AI-generated images,26 depending on whether they were created from text prompts or if they reflect the creator's "own mental conception."

Invalid ownership could also occur if, for example, a writer copies and pastes a link to an admirable piece of competitor content as inspiration into an AI tool and is given back half-plagiarized content.27 Invalid ownership claims regarding AI can ultimately lead to legal disputes and adversely impact an enterprise's reputation.

Cybersecurity and Resiliency Impact

AI has fundamentally altered the cybersecurity threat landscape. With minimal prompting, individuals with limited technical expertise can generate malware and phishing attacks or gather information without authorization, dramatically lowering the barrier to entry for sophisticated cybercrime.

A more recent development is the emergence of agentic and autonomous AI threats. Agent-based AI systems can independently plan, sequence, and execute multistep cyberoperations, including lateral movement, privilege escalation, persistence, and exfiltration. When combined with stolen credentials or weak internal controls, these systems can operate continuously without human oversight, compressing detection and response timelines beyond traditional security capabilities.28

Model integrity has also become a security concern. AI systems may be compromised through data poisoning, model inversion, prompt injection, or behavioral manipulation, resulting in biased outputs, covert data leakage, or degraded decision making without obvious system failure.29 Supply chain risk is similarly amplified. Organizations increasingly rely on third-party models, application programming interfaces (APIs), plugins, and fine-tuned systems. A compromised upstream dependency can introduce systemic risk across downstream deployments, creating cascading failures that traditional vendor risk assessments may not detect.30

Industry data reflects this shift. Darktrace and Zscaler have documented sharp increases in AI-assisted phishing, CEO fraud via synthetic voice impersonation, and automated attack campaigns.31 As a result, a majority of IT professionals expect to see successful AI-assisted cyberattacks, reflecting widespread concern across the security community.

Resilience planning must therefore expand. Business continuity and incident response strategies must now account for corrupted models, poisoned data, autonomous misbehavior, and AI-driven automation acting on faulty assumptions.

Weak Internal Permission Structures

One of the first opportunities many business leaders identified in AI was the optimization of internal systems, like enterprise resource planning or pricing models and inventory systems. They did not foresee that after inputting information into these systems, employees were then able to ask the same tool for their colleagues' salaries and other sensitive information. This is because AI systems can rapidly aggregate, infer, and summarize sensitive information, enabling users to access data outside their authorized role, such as personnel records or confidential business metrics. While permission misconfiguration is not unique to AI, AI can dramatically expand its impact.

Specialized AI tools that leverage language models to guess or generate passwords further increase risk when internal controls are weak.32 Identity governance and least-privilege enforcement have emerged as the primary controls for this risk.
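One way to picture least-privilege enforcement for an AI assistant is to filter source material by the requesting user's roles before any of it reaches the model. The following is a minimal sketch under stated assumptions, not a reference implementation: the `Document` type, the role names, and the `build_context` helper are all hypothetical and stand in for whatever identity and retrieval systems an enterprise actually uses.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    """Hypothetical record for a piece of internal content."""
    doc_id: str
    text: str
    allowed_roles: frozenset[str]  # roles permitted to read this document


def filter_for_user(documents: list[Document], user_roles: set[str]) -> list[Document]:
    """Keep only documents the user is already authorized to read."""
    return [d for d in documents if d.allowed_roles & user_roles]


def build_context(documents: list[Document], user_roles: set[str]) -> str:
    """Assemble the text an assistant may draw on for this user.

    The permission check runs before the model sees anything, so the
    assistant cannot summarize content outside the user's role.
    """
    permitted = filter_for_user(documents, user_roles)
    return "\n".join(d.text for d in permitted)


# Illustrative data only.
corpus = [
    Document("inv-01", "Warehouse stock levels for Q3.", frozenset({"operations", "finance"})),
    Document("hr-07", "Salary bands by employee.", frozenset({"hr"})),
]

operations_context = build_context(corpus, {"operations"})
```

In this sketch, an operations user who asks the assistant about colleagues' salaries gets nothing back from the HR record, because that record never enters the context in the first place.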

Skill Gaps

Defending against AI threats requires expertise in areas such as adversarial ML, identity governance, model integrity, and AI system monitoring. For many organizations, this can translate into impacts on budgets to reskill existing employees, recruit talent, or engage specialized external partners.33

Cybersecurity experts have also continued to advocate for the development of stronger digital identity frameworks to combat fraud.34 Implementing and governing such frameworks—particularly in an environment shaped by AI-generated content and deepfakes—requires technical capabilities that often exceed those of traditional IT departments.

Overreactions

IT professionals have long observed a pattern following ransomware attacks. Businesses that ignore their cybersecurity programs are attacked, after which they panic and overspend on security tools that do not always complement each other. This reactive approach often increases complexity and leaves organizations vulnerable to subsequent attacks.

A similar pattern is emerging in response to AI-related attacks. While some enterprises react to incidents in a way that excludes AI from business processes, others may move in the other direction, overreacting favorably toward AI and placing too much trust in unvalidated AI output. Staff may find themselves tasked with impossible workloads and resort to workarounds without testing their AI output. Those untested assumptions will exacerbate quality control issues and pose a reputational risk for the organization. Balanced, deliberate governance—not reactionary extremes—is essential.35

Intended and Unintended Use

Most commercially available AI systems include ethical and safety limitations designed to limit misuse. Because of the guardrails built into a tool like ChatGPT, for example, someone who asks AI how to commit a violent act may be advised to see a therapist. However, in recent years, it has become clear that these guardrails are neither universal nor foolproof.

Users have demonstrated the ability to bypass safeguards through prompt manipulation, system chaining, or the use of intentionally unregulated tools such as WormGPT.36 These techniques have increased the sophistication of business email compromise (BEC), fraud, and impersonation attacks, as well as access to harmful content. The gap between intended and actual use continues to widen as AI systems become more capable and modular.

Data Integrity

GenAI programs can produce outputs that appear confident, coherent, and authoritative, even when they are factually incorrect. This phenomenon—commonly referred to as hallucination—remains unresolved today. Different models may produce conflicting answers to identical questions, often without citations or transparency regarding the source material.37

As a result, organizations are increasingly recognizing the need for human in the loop (HITL) controls, particularly for high-impact decisions involving healthcare, legal judgments, surveillance, or criminal justice. In these contexts, AI systems may assist by generating recommendations, but final accountability must remain with human decision-makers.
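A human-in-the-loop control can be sketched as a routing step that holds any AI recommendation in a high-impact domain until a named person approves or rejects it. The example below is illustrative only: the domain labels, status strings, and function names are assumptions chosen for the sketch, and a real deployment would tie them to the organization's own case management and audit systems.

```python
from dataclasses import dataclass

# Assumed labels for the high-impact areas named in the text.
HIGH_IMPACT_DOMAINS = {"healthcare", "legal", "surveillance", "criminal_justice"}


@dataclass(frozen=True)
class Recommendation:
    domain: str
    summary: str


@dataclass(frozen=True)
class Decision:
    recommendation: Recommendation
    status: str      # "auto_approved", "pending_human_review", "approved", or "rejected"
    decided_by: str  # who is accountable for the outcome


def route(rec: Recommendation) -> Decision:
    """Hold high-impact recommendations for a person; let the rest proceed."""
    if rec.domain in HIGH_IMPACT_DOMAINS:
        return Decision(rec, "pending_human_review", "unassigned")
    return Decision(rec, "auto_approved", "ai_system")


def human_review(decision: Decision, reviewer: str, approve: bool) -> Decision:
    """Record the human decision so accountability rests with a named reviewer."""
    if decision.status != "pending_human_review":
        raise ValueError("only pending decisions can be reviewed")
    return Decision(decision.recommendation, "approved" if approve else "rejected", reviewer)


pending = route(Recommendation("healthcare", "Suggest adjusting medication dosage."))
final = human_review(pending, reviewer="dr_lee", approve=False)
```

The design choice worth noting is that the AI system can only produce a recommendation; the status of a high-impact decision changes only through `human_review`, which records who made the call.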

Data integrity challenges are further compounded by limited transparency into training data, model provenance, and bias. Detecting plagiarism, assessing the authority of sources, and identifying systemic bias within AI-generated outputs remain difficult. This challenge was highlighted in a 2023 Manhattan federal court case in which attorneys submitted AI-generated legal citations that did not exist.38

Without proper data integrity measures in place, employees using AI tools may produce incorrect or misleading results, which can have serious consequences for both the enterprise and its data quality.

Liability

Questions of liability are becoming increasingly prominent as they pertain to AI. For example, if an AI-driven decision results in financial loss, discrimination, or physical harm, where does accountability reside?39 If an AI chatbot makes inappropriate remarks to minors, who is liable? When an AI system produces harmful, discriminatory, or unlawful outcomes, responsibility could be distributed across developers, deployers, vendors, and users.

This challenge is particularly acute in the context of job automation. When a human employee makes an error, corrective action is typically directed at the individual. When an AI system fails, responsibility shifts to the organization. As AI replaces or augments human roles, enterprises must recognize that liability does not disappear—it consolidates.

Today, regulators and courts are increasingly signaling that organizations that deploy AI will be held accountable not only for outcomes, but for the adequacy of governance, oversight, and risk controls in place at the time of deployment.40

Looking Ahead: Quantum-Enabled AI and the Next Risk Horizon

AI capabilities are advancing at a pace that shows no sign of slowing. Beyond incremental improvements in model size and performance, the next phase of AI evolution will be shaped by the convergence of agentic AI systems, synthetic intelligences, and quantum computing.

Agentic AI systems are already capable of planning, executing, and adapting tasks across digital environments with limited human intervention. Synthetic intelligences—systems trained increasingly on AI-generated data rather than direct human input—are becoming more common as a means of scaling capability and reducing cost. Quantum computing, while still emerging, represents a fundamentally different computational paradigm.41 While each of these domains presents independent risk, their combination also introduces a new type of systemic exposure that organizations must begin preparing for now.

Why Quantum Changes the Equation

Most modern digital security relies on public-key cryptography, which protects data in transit, authenticates users and systems, and underpins everything from secure web traffic to software updates and digital identities. These cryptographic systems are considered secure because breaking them with classical computers would take an impractical amount of time.

Quantum computers change that assumption. Algorithms such as Shor’s algorithm theoretically enable sufficiently powerful quantum systems to break widely used cryptographic schemes, including RSA and elliptic curve cryptography. While large-scale, fault-tolerant quantum computers are not yet available, the risk is not hypothetical. Data intercepted and stored today can be decrypted in the future once quantum capability matures, a threat commonly referred to as “harvest now, decrypt later.”42 For organizations deploying AI systems that process sensitive data, make automated decisions, or operate across distributed environments, this risk is amplified.

What Is Post-Quantum Cryptography (PQC)?

Post-quantum cryptography (PQC) refers to cryptographic algorithms designed to remain secure against both classical and quantum attacks. Unlike quantum key distribution, which requires specialized hardware, PQC algorithms are intended to run on existing systems and networks, making them practical for broad deployment.43

In 2024, the US National Institute of Standards and Technology (NIST) finalized its first set of PQC standards, marking a critical transition point from research to implementation. Governments and regulators are increasingly signaling that organizations will be expected to begin planning and migrating to quantum-resistant cryptography, particularly for systems with long data retention periods or national security relevance.44

AI systems intensify the urgency of PQC adoption for three reasons:

- Longevity of data—AI models often retain, learn from, or depend on historical data. If that data is compromised in the future, the integrity of AI outputs and decisions may also be undermined.

- Automation at scale—Agentic AI systems can act continuously and autonomously. If cryptographic trust is broken, AI systems could propagate compromised decisions or actions faster than human oversight can intervene.

- Expanded attack surface—AI increases the number of machine-to-machine interactions, APIs, and identity relationships that depend on cryptographic assurance. Quantum risk applies across this entire surface simultaneously.

The Case for a Quantum-AI Readiness Team

Every organization deploying or planning to deploy AI at scale should establish a quantum-AI readiness capability, even if quantum computing appears far away. This does not require a large or specialized team, but it does require clear ownership, cross-functional coordination, and informed planning.

A quantum-AI readiness team should include representation from cybersecurity, enterprise architecture, risk management, legal and compliance, and AI program leadership.

Its responsibilities should include:

- Inventorying cryptographic dependencies across AI and non-AI systems

- Identifying long-lived data and systems vulnerable to future decryption

- Monitoring regulatory and standards developments related to PQC

- Coordinating phased migration plans aligned with business priorities

- Engaging external partners with expertise in quantum-safe security and AI governance
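The first two responsibilities above, inventorying cryptographic dependencies and identifying long-lived data, can be approximated with a simple triage over an asset list. This is a rough sketch under stated assumptions: the `CryptoAsset` fields, the priority labels, and especially the `horizon_years` planning value are hypothetical inputs a readiness team would choose for itself, not figures from any standard. The vulnerable families listed are the ones the article names (RSA and elliptic curve cryptography); ML-KEM appears only as an example of a NIST-standardized post-quantum algorithm.

```python
from dataclasses import dataclass

# Algorithm families the article identifies as breakable by Shor's algorithm.
QUANTUM_VULNERABLE = {"RSA", "ECC"}


@dataclass(frozen=True)
class CryptoAsset:
    """Hypothetical inventory entry for one system's cryptographic dependency."""
    system: str
    algorithm: str        # e.g. "RSA", "ECC", "ML-KEM"
    retention_years: int  # how long the protected data must stay confidential


def migration_priority(asset: CryptoAsset, horizon_years: int) -> str:
    """Rank an asset for PQC migration.

    horizon_years is an assumed planning horizon for when quantum
    decryption might become practical; it is a judgment call, not a fact.
    """
    if asset.algorithm not in QUANTUM_VULNERABLE:
        return "monitor"
    # Data that must stay secret past the horizon is exposed to
    # "harvest now, decrypt later" collection today.
    if asset.retention_years >= horizon_years:
        return "high"
    return "medium"


# Illustrative inventory only.
inventory = [
    CryptoAsset("model-training-archive", "RSA", retention_years=15),
    CryptoAsset("agent-api-gateway", "ECC", retention_years=1),
    CryptoAsset("pilot-pqc-tunnel", "ML-KEM", retention_years=15),
]

priorities = {a.system: migration_priority(a, horizon_years=10) for a in inventory}
```

Changing `horizon_years` shifts which assets land in the high tier, which is the point: the inventory stays useful even as estimates of quantum timelines move.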

The encouraging reality is that much of the work required to prepare for post-quantum AI risk aligns with existing best practices. Improving cryptographic agility, strengthening identity management, documenting data flows, and enforcing life cycle governance can all reduce current risk while enabling a future transition.45

Quantum readiness is an extension of disciplined risk management. Organizations that begin this work early will be better positioned to adapt as quantum capabilities mature, while those that delay may find themselves facing compressed timelines and heightened exposure.

Conclusion: From Awareness to Accountability

The use of AI magnifies both opportunity and risk. Organizations that treat AI as a productivity shortcut, rather than a governed system, expose themselves to legal liability, security failures, reputational damage, and long-term strategic erosion. At the same time, excessive caution carries its own cost. Enterprises that delay engagement in hopes of regulatory clarity or technological stabilization often discover that AI adoption has already occurred informally in their organizations. In these cases, risk is not avoided; it is obscured. Enterprises must acknowledge AI’s permanence and respond with deliberate, structured governance.

Continuous risk assessment, clear accountability, ethical guardrails, and security by design enable organizations to extract value from AI while preserving trust. The emergence of PQC further underscores the need for long-term thinking. Decisions made today about data retention, cryptographic dependency, and AI system design will determine security tomorrow.

Ultimately, AI risk is a leadership issue. Boards, executives, and risk professionals must move beyond awareness toward accountability. This includes assigning ownership, funding readiness efforts, engaging trusted partners, and embedding AI governance into enterprise risk management.

AI will continue to advance rapidly. The organizations that thrive will be those that build the capacity to adapt responsibly. In an era defined by speed, scale, and autonomy, preparedness is no longer optional.