In an era when the complexity and impact of cyberthreats are escalating, enterprises must rely on sophisticated models to quantify, predict, and mitigate cyberrisk. These models offer a structured approach to understanding vulnerabilities and potential losses, providing a clearer view of the risk landscape. However, to integrate these models into organizational decision making, stakeholders must trust in their accuracy, reliability, and transparency. Without a robust foundation of trust, even the most advanced models may be underutilized or disregarded altogether.

A relatively new facet of cybersecurity is the need to evaluate the cyberrisk models in use. Much of the US Federal Reserve’s guidance on model regulation is applicable to cybermodels as well, depending on their usage.1 However, simply because a model is available in the marketplace does not mean that its underlying scoring methods are sound and reliable. After all, “all models are wrong, but some are useful,” as statistician George Box is reputed to have said.2 The approach articulated here is designed to facilitate the selection of a model that is useful and reflects reality reasonably well.

The structured framework for evaluating trust in cyberrisk models proposed here is grounded in the classical rhetorical principles of logos, ethos, and pathos and operationalized through three progressively detailed tiers: attributes, artifacts, and evidence. By bridging logical reasoning, credibility, and emotional connection, this approach provides a holistic path to fostering trust and encouraging the effective adoption of cyberrisk models.

Trust in cyberrisk models is multifaceted, encompassing faith in the model’s outputs and confidence in the data sources, methodologies, and empirical validations underpinning these results. By rigorously structuring trust, enterprises can better assess the reliability of cyberrisk models and encourage their wider adoption, thus enhancing resilience against cyberthreats. However, engendering trust in a model involves much more than simply proving the mathematics of any underlying calculations. Trust is the product of a logical proof and the satisfaction of emotional requirements. One cannot simply “prove” trust mathematically; an iterative process is required to create trust.

Rhetorical Principles in Trust Building: Logos, Ethos, and Pathos

To fully understand the foundational elements of trust in cyberrisk models, it is valuable to draw from ancient Greek philosophy and Aristotle’s rhetorical principles, which are foundational tools for effective communication and persuasion. Aristotle introduced the concepts of logos, ethos, and pathos in his work Rhetoric, written in approximately 350 B.C.3 These three modes of persuasion have been used to analyze and develop powerful arguments in various fields, including law, literature, politics, and science. Understanding their origins and meanings provides deep insight into how they can be used to build trust, particularly in complex areas such as cyberrisk modeling, where clear communication is essential for stakeholder adoption.

Logos (Greek for "word" or "reason") refers to the logical structure of an argument. In rhetoric, logos is used to appeal to the audience’s sense of reason and logic. Aristotle emphasized the importance of providing clear, well-founded arguments that rely on evidence, data, and rational conclusions. When speakers or writers use logos, they are constructing their arguments through facts, statistics, and logical progressions. In modern communication, logos can be understood as presenting ideas in a way that appeals to the audience’s intellectual capacity. By leveraging logos, communicators can foster trust by showing that their models are based not on speculation but on rigorous, logical frameworks.

Ethos (Greek for "character") refers to the credibility or ethical appeal of a speaker or writer. Aristotle believed that for an argument to be persuasive, the person presenting it must be perceived as trustworthy and knowledgeable. Ethos is about convincing the audience that the speaker has the authority and integrity to speak on the topic. There are several ways to build ethos. One can highlight qualifications, credentials, or experience to establish credibility. Transparency and objectivity are essential for building trust. Ethical appeals involve demonstrating that the speaker or writer has the audience’s best interests at heart, is not misleading, and is open about the limitations of their work.

Pathos (Greek for "suffering" or "experience") is the emotional appeal used to persuade an audience by tapping into their emotions, values, and beliefs. Aristotle recognized that human beings are not purely rational creatures; emotions can significantly influence decision making. Pathos seeks to connect with the audience on an emotional level, creating a personal or emotional investment in the topic. Pathos can be used to build trust by sharing stories or examples that elicit empathy, concern, or confidence. In the context of cyberrisk, discussing the real-world consequences of cyberbreaches—such as financial loss, reputational damage, or customer harm—can evoke an emotional response. Citing others who have found the model helpful and framing the model’s benefits in terms of safeguarding what the audience values can be highly persuasive.

When communicating about cyberrisk models, incorporating logos, ethos, and pathos into the model’s defense ensures a well-rounded, persuasive approach.4 For example, logos ensures logical clarity. Stakeholders (both internal and external) must understand the model's structure, methodology, and rationale. Logical arguments based on sound data and evidence can clarify why the model is reliable. Ethos builds trust in the model and its creators. A credible model comes from credible sources, and emphasizing the expertise and ethical transparency of the model designers fosters confidence in the model itself. Finally, pathos recognizes that selecting a model has its own risk, and this makes the stakes personal. Actively seeking feedback from others who have used the model can reassure potential users of the validity of the designer’s claims. This emotional investment can be the final element that convinces someone to adopt a model. By combining these three rhetorical methods, model designers can ensure that their message appeals to users' rational and emotional sides, providing a balanced and compelling argument for trusting a particular cyberrisk model.

The Cyberrisk Model Trust Framework: Attributes, Artifacts, and Evidence

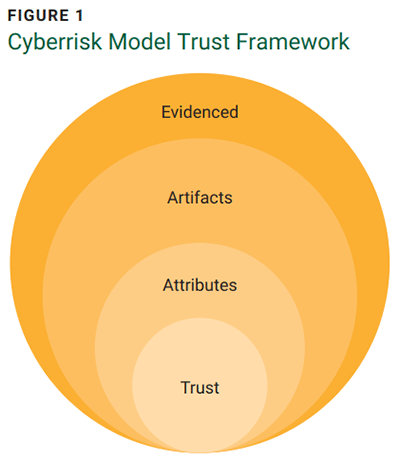

Although Aristotle’s three rhetorical principles are helpful, they provide only a general model for communication. A specific approach related to fostering trust in a cyberrisk model can be divided into three key tiers: attributes, artifacts, and evidence (figure 1). Each one offers a pathway for enterprises to assess and build confidence in the cyberrisk models they employ.

Attributes

The first tier of the framework includes four attributes: model transparency, data transparency, validation, and empiricism. These attributes are the basic qualities that inform a model’s design and function. Model transparency pertains to the openness of the model’s structure, and data transparency pertains to the openness of the data the model consumes. Transparency allows for the evaluation of how well the model meets the objectives and users’ intended use cases. Openly presenting the model’s methodologies and calculations enables stakeholders to scrutinize and understand its underlying logic. These first two attributes help satisfy model users' logos needs, namely, ensuring that the model is logically sound and fit for its intended purpose.

The third attribute, validation, is designed to satisfy the ethos needs of model users. Validation focuses on performing the necessary tests to ensure that the model operates as stated in its documentation. Typically, model designers test their models and document this testing. This provides a first-party perspective of whether the model operates as intended and as specified in its design documentation. However, high-quality models also undergo evaluation by third parties. Much like an external audit, this assures that the model outputs are valid and deserve one’s trust.

Finally, empiricism reflects the trust that others have put in the model and how that trust might be transferred to a new model user. This empirical trust is designed to appeal to users’ pathos needs. In many endeavors, individuals look to their peers for advice. Likewise, in cyberrisk models, it helps to be reassured that others have found a particular model to be reliable. Additional empirical evidence exists when a best-in-class enterprise, such as a large enterprise in a certain industry, has adopted the model.

Artifacts

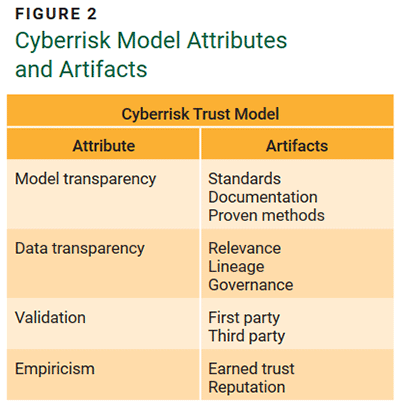

Artifacts represent the tangible elements of the trust attributes (figure 2). They answer the question, “What is meant by x?”

Model Transparency

When assessing a model’s transparency, it is helpful to evaluate the documentation associated with the model. Ideally, the model documentation should serve as high-level guidance that could be used to recreate the model. Certain details might require a skilled reader to fill in gaps, but generally, the documentation should provide insight into how the model calculations are performed. There may be an explicit or implicit reference to the standards used in the model design. For example, the documentation might say that risk is calculated using a Factor Analysis of Information Risk (FAIR) or FAIR-like model, the loss distribution approach (LDA), or some other relevant standard.5 In addition, the documentation should articulate the methods used; for cyberrisk models, the gold standard is a probabilistic forecasting method such as Monte Carlo simulation or Bayesian networks.6
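To make the Monte Carlo method concrete, the following is a minimal sketch of a frequency-and-magnitude simulation of the kind a FAIR-like or LDA-style model might document. It is illustrative only: the choice of a Poisson event count and a lognormal loss per event, the function names, and every parameter value are assumptions made for this example, not a description of any particular vendor's model.

```python
import math
import random
import statistics


def sample_poisson(rng, lam):
    """Draw one Poisson-distributed count using Knuth's multiplication method."""
    threshold = math.exp(-lam)
    count, product = 0, rng.random()
    while product > threshold:
        count += 1
        product *= rng.random()
    return count


def simulate_annual_losses(event_rate, loss_mu, loss_sigma, trials=10_000, seed=42):
    """Simulate total loss per year: Poisson event count, lognormal loss per event."""
    rng = random.Random(seed)
    totals = []
    for _ in range(trials):
        events = sample_poisson(rng, event_rate)
        totals.append(sum(rng.lognormvariate(loss_mu, loss_sigma) for _ in range(events)))
    return totals


def summarize(totals):
    """Return the mean and an approximate 95th-percentile annual loss."""
    ordered = sorted(totals)
    p95 = ordered[int(0.95 * (len(ordered) - 1))]
    return {"mean": statistics.fmean(ordered), "p95": p95}


# Hypothetical inputs: about 0.5 loss events per year, median loss per event near exp(11).
losses = simulate_annual_losses(event_rate=0.5, loss_mu=11.0, loss_sigma=1.0)
summary = summarize(losses)
```

Documentation that is transparent in the sense described above would let a reviewer identify the equivalent of each piece here: which distributions are used, where their parameters come from, and how the simulated outcomes are summarized.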

Data Transparency

Closely related to model transparency is data transparency. Here, the focus shifts slightly from how the model is being managed to what the model is being fed (data). In the case of a cyberrisk model, if it is leveraging automated data solutions, one should be able to evaluate those sources by looking for several key artifacts. This means looking at how relevant the data is to the organization. Generally, cyberrisk models operate in two modalities: Users bring their own data or they leverage the model designer's data (although some models allow for a combined approach). Regardless of which modality is used, the data must be evaluated to determine whether it is relevant to the enterprise. Model vendors typically use some sort of peer methodology that can articulate the data’s relevance. They are likely to use three key variables: industry, geography, and size. Proxy data is often used: standard industry groupings such as the North American Industry Classification System (NAICS) or Standard Industrial Classification (SIC);7 geographic groupings based on country, state, or region; and size groupings determined by revenue or employee count.8 It should be possible to narrow these categories to a reasonable level given a specific use case for model usage.
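As a rough illustration of peer-based relevance filtering, the sketch below narrows a data set by the three variables named above. The `PeerRecord` type, the `select_peers` function, and the sample records are all hypothetical and exist only for this example; a real model would draw on far richer data and may define peer groups differently.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PeerRecord:
    """One hypothetical loss-data record with the three peer-grouping variables."""
    naics_code: str      # industry proxy, e.g. "522110"
    country: str         # geography proxy
    revenue_usd: float   # size proxy


def select_peers(records, naics_prefix, country, min_revenue, max_revenue):
    """Keep records matching the industry prefix, country, and revenue band."""
    return [
        r for r in records
        if r.naics_code.startswith(naics_prefix)
        and r.country == country
        and min_revenue <= r.revenue_usd <= max_revenue
    ]


records = [
    PeerRecord("522110", "US", 2.0e9),
    PeerRecord("522130", "US", 4.0e8),
    PeerRecord("522110", "CA", 1.5e9),
    PeerRecord("621111", "US", 3.0e9),
]

# Narrow to US firms in NAICS sector 52 (Finance and Insurance), revenue 1B to 5B.
peers = select_peers(records, naics_prefix="52", country="US",
                     min_revenue=1.0e9, max_revenue=5.0e9)
```

Matching on a NAICS prefix rather than the full code is one way to widen or narrow the industry grouping; a longer prefix yields a tighter peer set but fewer records to learn from.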

Once the relevance of the data is determined, it is helpful to know where the data comes from—its lineage. This can give insights into any potential blind spots and biases in the data sets. Some modelers build their own data sets using, for example, external scan engines. Others have contractual relationships with public data brokers and private data dealers. Each approach has pros and cons and may signal different strengths and weaknesses, depending on the specific use case.

The final element is governance, which relates to how the data is managed. If the model requires the user to provide the data, processes must be established to ensure that the model is fed accurate and proper data. For a vendor-provided data model, users must evaluate how the vendors accomplish the same. In all cases, the governance model should create processes to keep track of the data’s provenance.

Validation

Model validation can be conducted internally (first party), externally (third party), or by a combination of the two. Model users are responsible for performing due diligence on the model’s design and operations by reviewing relevant artifacts. This process should include a risk assessment of the model itself—a meta-risk analysis that considers the potential consequences of misinformed decisions stemming from flaws in the model’s design or function. This assessment also examines how data quality impacts model accuracy. For many enterprises, the greatest risk is the possibility that their risk models may be incorrect.9

First-party evaluations are necessary for all models, whether they are self-built or acquired from a vendor. However, when a model is purchased from a vendor, the vendor should have completed the third-party model validation. This is equivalent to an external audit. There should be evidence that this evaluation has been done, either by sharing the details of the evaluation or by providing a summary statement of attestation. Be on the lookout for qualified statements and scope limitations when assessing a model’s suitability.

Empiricism

Finally, the empirical side of the model has its own valuable artifacts. Over time, as a model is used, it builds trust through a proven track record within the enterprise. However, this earned trust is not available when implementing a new model. In such cases, a “trust proxy” can be used to assess the model’s reputation. This might include reviewing case studies from other enterprises that use the model, speaking directly with these users, or considering endorsements from high-trust entities such as an insurance underwriter or bank regulator. Insights from long-term users are especially valuable, as they can share experiences and identify the model’s limitations in a variety of scenarios.

Evidence and Scoring

For each artifact, users should gather evidence demonstrating the model’s adherence to each tenet. This includes collecting documentation of the model’s design and operations, among other relevant materials. Look for evidence of testing, failures, how risk is calculated, and whether those methods are commonly accepted in the industry. Ask for and evaluate traceability documentation to see how inputs are used in model calculations. Request any internal or external model calibration and testing results used to validate the model. Evaluate internal usage of the model and note any open issues or complaints from model users. Also, speak with external model users to evaluate their experiences.

Model risk evaluation for financial services enterprises is described in the US Federal Reserve SR 11-7 standard, and those in that industry should follow it meticulously.10 However, for others, a simple scoring system can be established to evaluate a model's reliability. Each evaluation should be performed with due consideration of the specific use case being evaluated. For example, is the model useful for evaluating cyberrisk and reporting to the board, making insurance purchases, determining budgetary allocations, etc.?

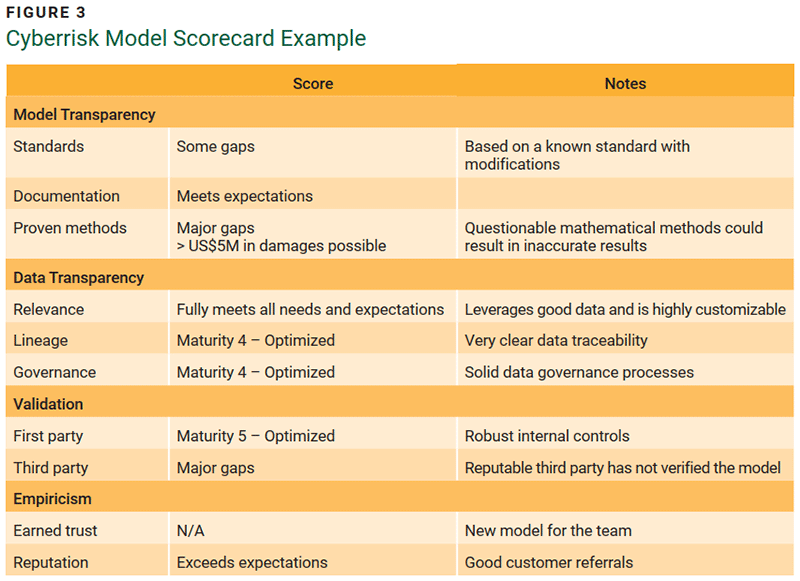

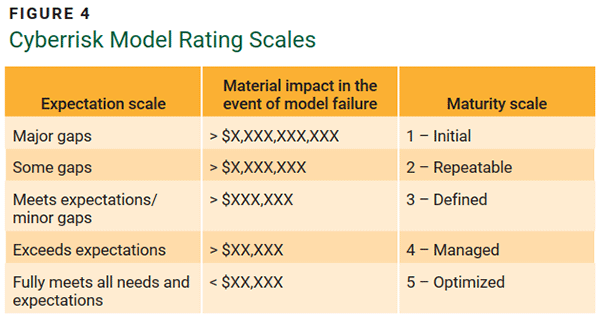

Figure 3 illustrates the described model trust scoring parameters with example scoring and notes using the qualitative and quantitative rating scales illustrated in figure 4.

By evaluating each artifact, an attribute score (e.g., an average or sum) can be derived, leading to an overall score of model trust. Typically, enterprises have established risk tiers that classify models as high, medium, or low risk given their baseline usage (e.g., trading algorithms for financial services enterprises tend to be high risk), along with a residual risk assessment that reflects how well designed and operationalized the model is.
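The roll-up from artifact ratings to attribute scores to an overall score can be sketched as follows. This is only one possible arrangement: the 1-to-5 scale, the equal weighting of attributes, the artifact names (paraphrased from the discussion above), the example ratings, and the label cutoffs are all assumptions for illustration and are not taken from figures 3 and 4.

```python
from statistics import fmean

# Hypothetical 1-5 artifact ratings; artifact names are paraphrased from the text,
# and the scale is an assumption (the article's figures define the actual scales).
artifact_scores = {
    "model transparency": {"documentation": 4, "standards": 5, "methods": 4},
    "data transparency": {"relevance": 3, "lineage": 2, "governance": 3},
    "validation": {"first party": 4, "third party": 2},
    "empiricism": {"internal track record": 3, "external references": 4},
}


def attribute_scores(scores):
    """Average the artifact ratings within each attribute."""
    return {attribute: fmean(ratings.values()) for attribute, ratings in scores.items()}


def overall_score(scores):
    """Average the attribute scores so each attribute carries equal weight."""
    return fmean(attribute_scores(scores).values())


def trust_label(score, thresholds=((4.0, "high"), (2.5, "medium"))):
    """Map a numeric score to a label using hypothetical cutoffs."""
    for cutoff, label in thresholds:
        if score >= cutoff:
            return label
    return "low"


per_attribute = attribute_scores(artifact_scores)
overall = overall_score(artifact_scores)
print(per_attribute, round(overall, 2), trust_label(overall))
```

Averaging within each attribute first keeps an attribute with many artifacts from dominating the overall score; an enterprise that considers one attribute more important for a given use case could substitute a weighted average.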

Conclusion

Enterprises depend on advanced cyberrisk models to assess, quantify, and manage threats in today's evolving cybersecurity landscape. However, the effectiveness of these models relies heavily on stakeholders’ trust in their accuracy and reliability. A comprehensive framework for evaluating and fostering trust in cyberrisk models, drawing on Aristotle’s rhetorical principles of logos, ethos, and pathos, can address the multifaceted aspects of model validation. By integrating logical rigor, credibility, and emotional appeal, the framework helps establish confidence in model outputs. The proposed framework categorizes trust factors into three tiers—attributes, artifacts, and evidence—each with specific criteria for assessing a model’s transparency, validation, and empirical support. This structured approach empowers enterprises to make informed decisions, enhances resilience against cyberthreats, and promotes the broader adoption of reliable risk models across sectors.

Endnotes

1 Board of Governors of the Federal Reserve System, Supervision and Regulation Letter SR 11-7: Guidance on Model Risk Management, USA, 4 April 2011

2 Box, G.E.P.; “Science and Statistics,” Journal of the American Statistical Association, vol. 71, iss. 356, 1976, p. 791-799

3 Aristotle, The Art of Rhetoric, trans. W. Rhys Roberts, Dover Publications, USA, 2004

4 Freund, J.; “Communicating Technology Risk to Nontechnical People,” ISACA® Journal, vol. 3, 2020

5 Freund, J.; Jones, J.; Measuring and Managing Information Risk: A FAIR Approach, Elsevier, USA, 2014; Alexander, C.; Sheedy, E.; “The Professional Risk Managers’ Guide to the Loss Distribution Approach,” in C. Alexander (Ed.), Operational Risk: Regulation, Analysis, and Management, Prentice Hall, USA, 2005

6 Vose, D.; Risk Analysis: A Quantitative Guide, 3rd ed., Wiley, USA, 2008

7 US Census Bureau, “North American Industry Classification System (NAICS) Manual,” USA, 2022; US Department of Labor, “Standard Industrial Classification Manual,” USA, 1987

8 Provost, F.; Fawcett, T.; Data Science for Business: What You Need to Know About Data Mining and Data-Analytic Thinking, O’Reilly Media, USA, 2013

9 Hubbard, D.W.; The Failure of Risk Management: Why It’s Broken and How to Fix It, Wiley, USA, 2009

10 Board of Governors of the Federal Reserve System, Supervision and Regulation

JACK FREUND | PH.D., CISA, CISM, CRISC, CGEIT, CDPSE

Is an experienced cybersecurity and governance, risk, and compliance (GRC) professional. He is the coauthor of the foundational cyberrisk quantification (CRQ) book using the FAIR standard, which was inducted into the Cybersecurity Canon in 2016. He was named an ISSA Distinguished Fellow, FAIR Institute Fellow, IAPP Fellow of Information Privacy, ISC2 2020 Global Achievement Awardee, and ISACA’s 2018 John W. Lainhart IV Common Body of Knowledge Award recipient. Freund also serves on the board of the ISSA Education Foundation.